This is a reblog of my “building a home NAS server” series from my old blog. The server still exists and still works, but I’m about to embark on an overhaul, so I wanted to consolidate all the articles on the same blog.

Unfortunately, the excitement from seeing OpenSolaris’s disk performance died down pretty quickly when I noticed that putting some decent load on the network interface resulted in the network card locking up after a little while. I guess that’s what I get for using the on-board Realtek instead of opening the wallet a little further and buying an Intel PCI-E network card. That said, the lock-up was specific to OpenSolaris - neither Ubuntu nor FreeBSD exhibited this sort of behaviour. I could get OpenSolaris to lock up the network interface reproducibly while rsyncing from my old server.

This gave me the opportunity to try the third option I was considering, Ubuntu Server. Again, this is the latest release with the latest kernel update installed. The four 1TB drives were configured as a RAID5 array using mdadm. Once the array had been rebuilt, I ran the same iozone test on it (basically just iozone -a with Excel-compatible output). To my surprise, this was even slower than FreeBSD/zfs, even though the rsync felt faster. Odd, that.
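For anyone who wants to repeat the test, the array setup and benchmark run described above can be sketched roughly as follows. This is only an illustration: the device names, the array name `/dev/md0` and the output filename are placeholders and not the ones from this build, and the `-R`/`-b` flags are an assumption about how the Excel-compatible output was produced. The script only prints the commands; it doesn't run them.

```python
import shlex


def mdadm_create_cmd(array, members, level=5):
    """Build the argv for creating an md array; nothing is executed here."""
    return [
        "mdadm", "--create", array,
        f"--level={level}",
        f"--raid-devices={len(members)}",
        *members,
    ]


def iozone_cmd(xls_path):
    """Build the argv for an automatic-mode iozone run with spreadsheet output."""
    # -a: automatic mode, -R: Excel-style report, -b: write that report to a file
    return ["iozone", "-a", "-R", "-b", xls_path]


if __name__ == "__main__":
    # Hypothetical device names for the four data drives.
    members = ["/dev/sdb", "/dev/sdc", "/dev/sdd", "/dev/sde"]
    print(shlex.join(mdadm_create_cmd("/dev/md0", members)))
    print(shlex.join(iozone_cmd("iozone-results.xls")))
```

Keeping the commands as plain lists makes it easy to hand them to `subprocess.run` later, once the device names have been double-checked against the real hardware.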

Here are a few pretty graphs that show the results of the iozone write test. Reading data was faster, as expected, but the critical bit for me is writing, as the server hosts all the backups for the various other PCs around here and also gets to serve rather large files.

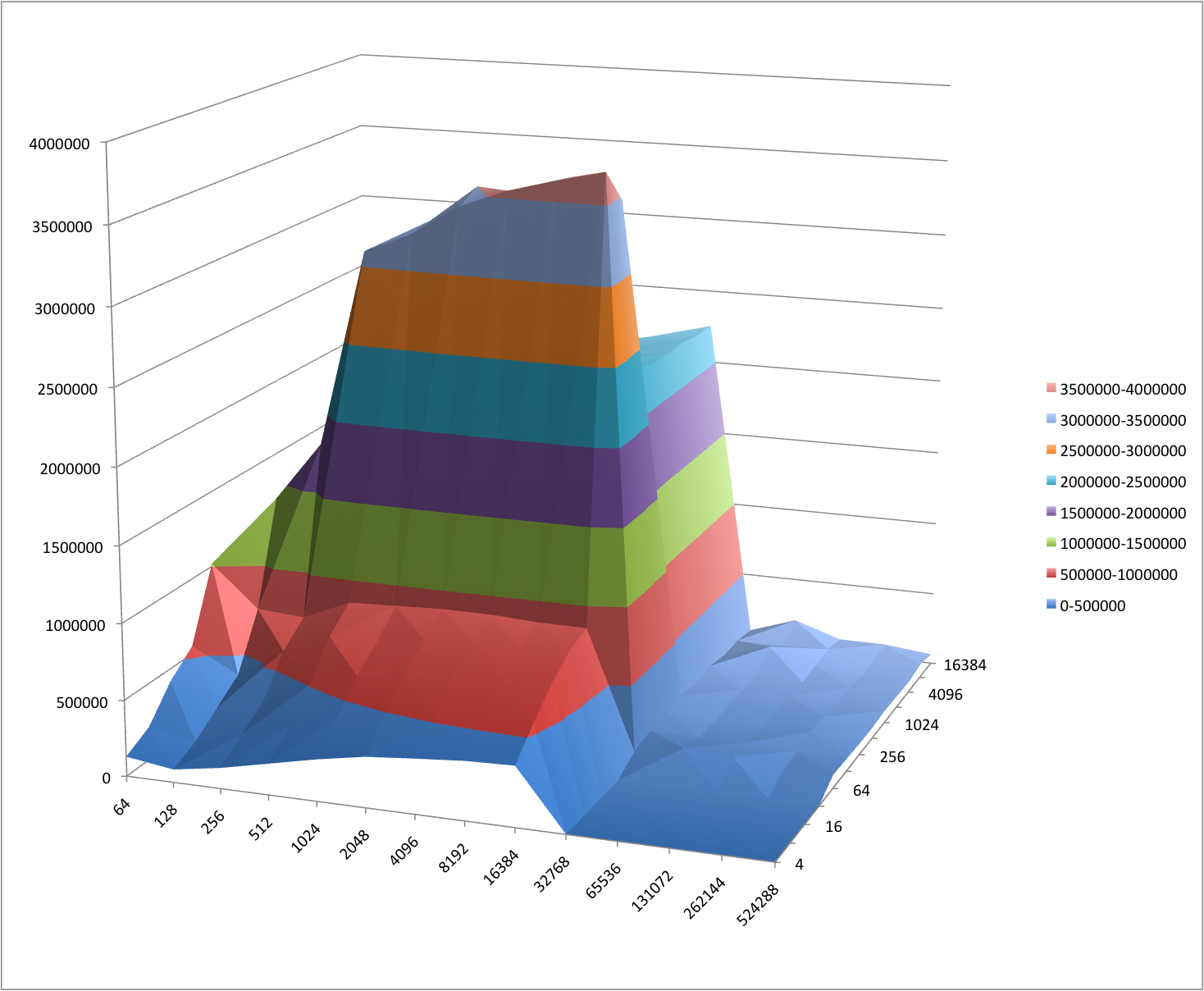

First, we have OpenSolaris’s write performance:

iozone performance on OpenSolaris

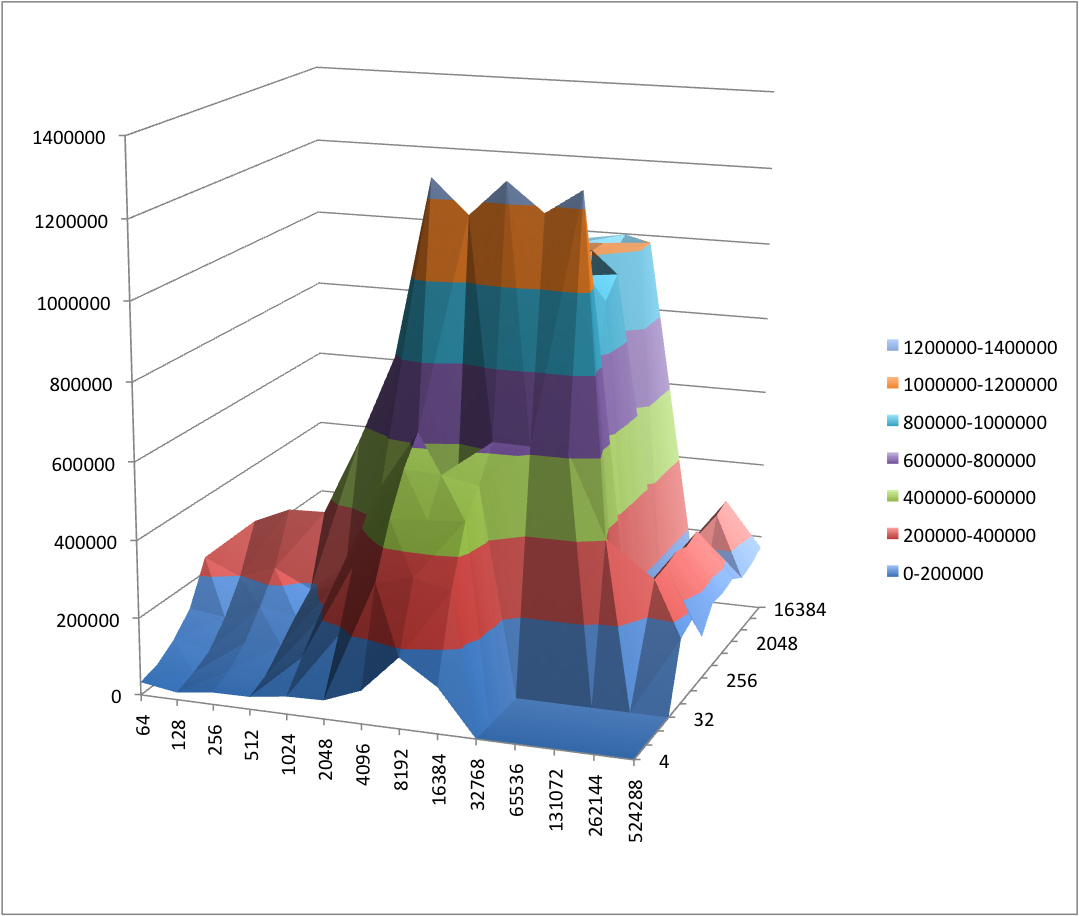

FreeBSD with zfs is noticeably slower, but still an improvement over my existing server - that one only has two drives in a mirrored configuration and, oddly enough, its write speed is about half that of the new server:

iozone performance on FreeBSD with zfs

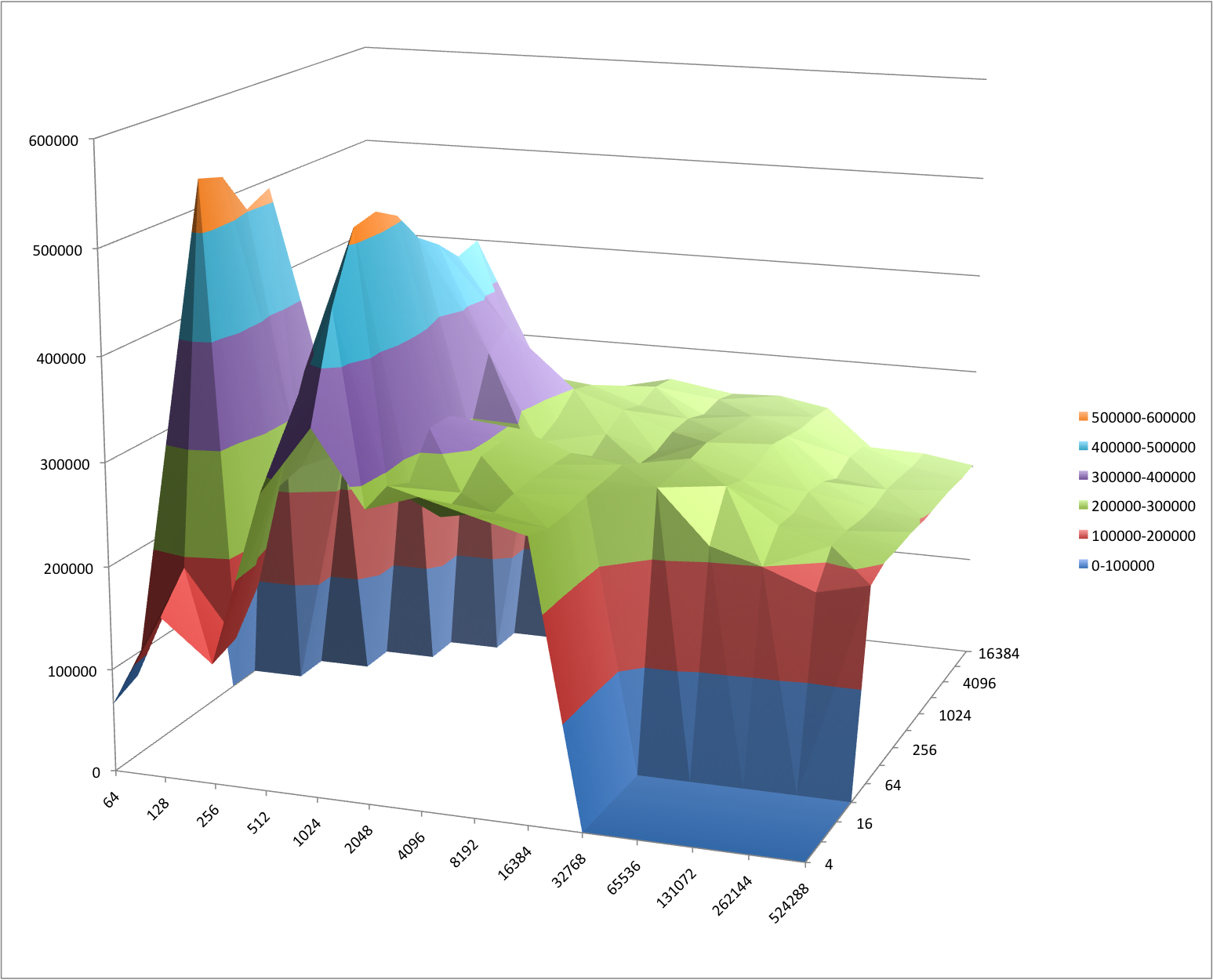

FreeBSD with the geom-based raid is slower, but I believe that this is due to the smaller number of disks. With this implementation you need to use an odd number of disks, so the fourth disk wasn’t doing anything during those tests. It’s not surprising, then, that the overall transfer rate came in at roughly 3/4 of the zfs one.

iozone performance on FreeBSD with graid

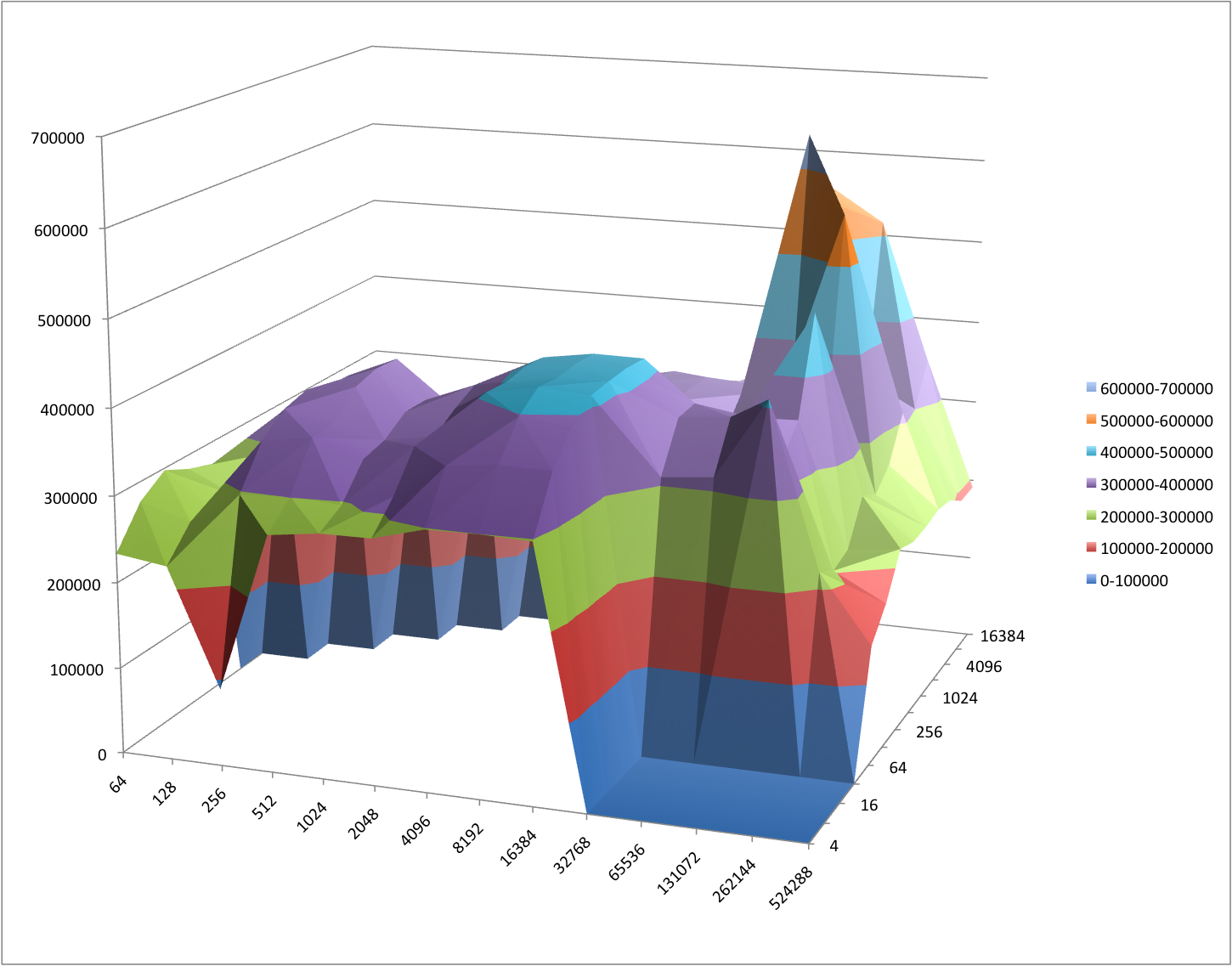

Ubuntu with an mdadm raid array unfortunately brings up the rear. I was really surprised by this, as it comes in below the performance of the 3-disk array under FreeBSD:

iozone performance on Ubuntu server

One thing I noticed is that while the four Samsung drives are SATA-300 drives, the disk I reused as a system drive is not. I’m not sure if that makes any difference, but I’ll try to source a SATA-300 disk for use as the system disk to find out. I’m also not sure if FreeBSD and Linux automatically use the drives’ command queueing ability. On these comparatively slow but large drives that can make a massive difference. Beyond that I’m a little stumped; I’m considering the purchase of the aforementioned Intel network card, as that would hopefully allow me to run OpenSolaris, but otherwise I’m open to suggestions.
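On the Linux side, one way to check the command queueing question is to look at the queue depth the kernel exposes in sysfs for each disk. The snippet below is a minimal sketch, assuming the drives show up as `sd*` devices and that the `device/queue_depth` attribute is present; a depth of 1 generally means no queueing is in use, while a larger value suggests it is. The function name and the overridable base path are my own choices for illustration.

```python
from pathlib import Path


def queue_depths(sys_block="/sys/block"):
    """Return {device: queue_depth} for every sd* disk exposing the attribute."""
    depths = {}
    for dev in sorted(Path(sys_block).glob("sd*")):
        attr = dev / "device" / "queue_depth"
        try:
            depths[dev.name] = int(attr.read_text().strip())
        except (OSError, ValueError):
            # Attribute missing, unreadable or not a number: skip this disk.
            continue
    return depths


if __name__ == "__main__":
    for name, depth in queue_depths().items():
        state = "queueing in use" if depth > 1 else "no queueing"
        print(f"{name}: queue_depth={depth} ({state})")
```

This only covers Linux; FreeBSD would need a different check.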